Kubernetes Troubleshooting Guide

This article covers the steps and methods for troubleshooting common Pod and flannel failures.

1) Pod troubleshooting

In most cases the problem lies in the Pod itself. Locate it with the following steps:

- kubectl get pod: check whether any Pod is in an abnormal state

- journalctl -u kubelet -f: follow the kubelet log and look for errors

- kubectl logs pod/xxxxx -n kube-system: read the logs of the failing Pod
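The first step can be partly automated. The sketch below (the function name list_unhealthy_pods is hypothetical) reads the plain-text output of kubectl get pod and prints every Pod that is not Running, or is Running but not fully ready; Completed Pods left by finished Jobs are skipped. It assumes the default column layout NAME READY STATUS RESTARTS AGE, i.e. no --all-namespaces, which would add a NAMESPACE column.

```shell
#!/usr/bin/env bash
# Hypothetical helper: filter `kubectl get pod` output (default columns:
# NAME READY STATUS RESTARTS AGE) down to Pods that look unhealthy.
list_unhealthy_pods() {
  awk 'NR > 1 {
    split($2, ready, "/")          # READY is "ready/total", e.g. 0/1
    if ($3 == "Completed") next    # finished Job Pods are not failures
    if ($3 != "Running" || ready[1] != ready[2]) print $1, $3
  }'
}

# Usage (assumes kubectl is configured for the cluster):
# kubectl get pod -n kube-system | list_unhealthy_pods
```

On the kube-system listing in the next section, this would print the two kube-flannel-ds-arm64 Pods together with their CrashLoopBackOff status.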

2) Example: troubleshooting CrashLoopBackOff and OOMKilled

1 Check the node status

[root@k8s-m1 src]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-c1 Ready <none> 16h v1.14.2

k8s-m1 Ready master 17h v1.14.2

2 First, check whether the Pods are healthy

[root@k8s-m1 docker]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-fb8b8dccf-5g2cx 1/1 Running 0 2d14h

coredns-fb8b8dccf-c5skq 1/1 Running 0 2d14h

etcd-k8s-master 1/1 Running 0 2d14h

kube-apiserver-k8s-master 1/1 Running 0 2d14h

kube-controller-manager-k8s-master 1/1 Running 0 2d14h

kube-flannel-ds-arm64-7cr2b 0/1 CrashLoopBackOff 629 2d12h

kube-flannel-ds-arm64-hnsrv 0/1 CrashLoopBackOff 4 2d12h

kube-proxy-ldw8m 1/1 Running 0 2d14h

kube-proxy-xkfdw 1/1 Running 0 2d14h

kube-scheduler-k8s-master 1/1 Running 0 2d14h

The network plugin kube-flannel kept restarting: sometimes it ran normally, sometimes it showed CrashLoopBackOff, and sometimes OOMKilled.

3 Check the kubelet log

[root@k8s-m1 src]# journalctl -u kubelet -f

12月 09 09:12:45 k8s-m1 kubelet[35667]: E1209 09:12:45.895575 35667 pod_workers.go:190] Error syncing pod 2eaa8ef9-1822-11ea-a1d9-70fd45ac3f1f ("kube-flannel-ds-arm64-7cr2b_kube-system(2eaa8ef9-1822-11ea-a1d9-70fd45ac3f1f)"), skipping: failed to "StartContainer" for "kube-flannel" with CrashLoopBackOff: "Back-off 5m0s restarting failed container=kube-flannel pod=kube-flannel-ds-arm64-7cr2b_kube-system(2eaa8ef9-1822-11ea-a1d9-70fd45ac3f1f)"

4 Check the logs of the network plugin kube-flannel

[root@k8s-m1 src]# kubectl logs kube-flannel-ds-arm64-88rjz -n kube-system

E1209 01:20:42.527856 1 iptables.go:115] Failed to ensure iptables rules: Error checking rule existence: failed to check rule existence: running [/sbin/iptables -t nat -C POSTROUTING ! -s 10.244.0.0/16 -d 10.244.0.0/16 -j MASQUERADE --random-fully --wait]: exit status -1:

E1209 01:20:46.928502 1 iptables.go:115] Failed to ensure iptables rules: Error checking rule existence: failed to check rule existence: running [/sbin/iptables -t filter -C FORWARD -s 10.244.0.0/16 -j ACCEPT --wait]: exit status -1:

E1209 01:20:52.128049 1 iptables.go:115] Failed to ensure iptables rules: Error checking rule existence: failed to check rule existence: running [/sbin/iptables -t filter -C FORWARD -s 10.244.0.0/16 -j ACCEPT --wait]: exit status -1:

E1209 01:20:52.932263 1 iptables.go:115] Failed to ensure iptables rules: Error checking rule existence: failed to check rule existence: fork/exec /sbin/iptables: cannot allocate memory
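Because the container keeps restarting, the log of the crashed instance (kubectl logs --previous) is often the more useful one, and with many near-identical iptables lines it helps to see how many of them are really memory failures. A small sketch; the function name is hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: tally flannel log lines read from stdin.
# Every failed rule check logs "Failed to ensure iptables rules"; the subset that
# failed because /sbin/iptables could not even be forked says "cannot allocate memory".
summarize_flannel_errors() {
  awk '
    /Failed to ensure iptables rules/ { ipt++ }
    /cannot allocate memory/          { mem++ }
    END { printf "iptables_errors=%d memory_errors=%d\n", ipt, mem }'
}

# Usage: --previous reads the log of the last crashed container instance.
# kubectl logs --previous kube-flannel-ds-arm64-7cr2b -n kube-system | summarize_flannel_errors
```

On the four log lines shown above this prints iptables_errors=4 memory_errors=1; a non-zero memory count already hints that iptables itself is not the problem.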

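At first I kept suspecting iptables. But when I copied the command that iptables.go runs and executed it on the command line, it worked fine, which left me at a loss, until I noticed that the Pod sometimes showed: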

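kube-flannel-ds-arm64-hnsrv 0/1 OOMKilled 4 2d12h

Together with the last log line above (fork/exec /sbin/iptables: cannot allocate memory), this points to the real cause: the iptables errors were only a symptom, and the flannel container was running out of memory, so it could not even start the iptables process.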
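The OOMKilled status and the "cannot allocate memory" error both point at the container's memory limit. The article does not spell out a fix; one possible follow-up, sketched below, is to confirm the last termination reason and then raise the limit. It assumes the flannel Pods carry the label app=flannel and that the DaemonSet is named kube-flannel-ds-arm64 (inferred from the Pod names above); the container name kube-flannel comes from the kubelet log. The 200Mi default is an illustrative value, not a tuned one, and the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Assumption: flannel Pods are labelled app=flannel (check your kube-flannel.yml).
# Prints "<pod>\t<reason the container last terminated>", e.g. OOMKilled.
flannel_last_exit_reasons() {
  kubectl get pod -n kube-system -l app=flannel \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.containerStatuses[0].lastState.terminated.reason}{"\n"}{end}'
}

# Assumption: the DaemonSet is kube-flannel-ds-arm64 and its container is kube-flannel.
# Raises only the memory limit; 200Mi is an example value.
raise_flannel_memory_limit() {
  local limit="${1:-200Mi}"
  kubectl set resources daemonset kube-flannel-ds-arm64 -n kube-system \
    -c kube-flannel --limits="memory=${limit}"
}
```

kubectl set resources edits the DaemonSet's Pod template; depending on the DaemonSet's update strategy, the flannel Pods are replaced automatically (RollingUpdate) or only after you delete them (OnDelete).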

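3) Fixing ImagePullBackOff

Common causes:

- The image name is invalid: for example, the name is misspelled, or the image does not exist

- The image is given a tag that does not exist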
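Both causes can be confirmed by pulling the image by hand on the node, which surfaces the registry's own error message. A sketch; the function name is hypothetical, and it assumes Docker is the container runtime, as in the rest of this article:

```shell
#!/usr/bin/env bash
# Hypothetical helper: look up the images a Pod requests and pull each one
# manually so the real registry error (bad name, missing tag) is printed.
reproduce_image_pull() {
  local pod="$1" ns="${2:-default}" image
  # jsonpath prints all container images of the Pod, separated by spaces
  for image in $(kubectl get pod "$pod" -n "$ns" -o jsonpath='{.spec.containers[*].image}'); do
    echo "pulling $image"
    docker pull "$image"
  done
}

# Usage (placeholder names): reproduce_image_pull my-pod my-namespace
```

kubectl describe pod also lists the pull error under Events, which is often enough on its own.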

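4) Network plugin kube-flannel fails to start

This is usually caused by a failure to download the flannel image. The default image location is quay.io/coreos/flannel, which cannot be reached directly from networks in mainland China. In that case, pull the image from quay-mirror.qiniu.com/coreos/flannel instead and retag it with the quay.io name.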
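The pull-and-retag step can be sketched as follows. It assumes the manifest is ./kube-flannel.yml and that Docker is the runtime; the image names are read from the manifest itself so the tag always matches, and the mirror host is the one named above. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: list the distinct quay.io flannel images a manifest uses.
flannel_images() {
  grep -o 'quay\.io/coreos/flannel:[^"[:space:]]*' "${1:-kube-flannel.yml}" | sort -u
}

# Pull each image from the mirror, then tag it with the original quay.io name
# so the kubelet finds it locally.
pull_flannel_via_mirror() {
  local image mirror
  for image in $(flannel_images "$1"); do
    mirror="${image/quay.io/quay-mirror.qiniu.com}"
    docker pull "$mirror" && docker tag "$mirror" "$image"
  done
}

# Usage: pull_flannel_via_mirror kube-flannel.yml
```

Run it on every node, since the DaemonSet starts a flannel Pod on each node and each node uses its local image. The retagged copy is picked up because the manifest's tag is not latest, so the default pull policy (IfNotPresent) uses the local image instead of contacting quay.io.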

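Then run:

kubectl create -f kube-flannel.yml

5) Worker node cannot join the cluster

Everything installs successfully on the master node, and it prints the command for joining worker nodes. But when you run that command on a worker node, the node fails to join, or the command hangs at the shell.

First, check the firewall with systemctl status firewalld.service. The cluster nodes must be able to reach each other over the network, so if the firewall is running, either turn it off or add rules (for example in iptables) that allow the cluster traffic. After that, the nodes should be able to reach each other.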

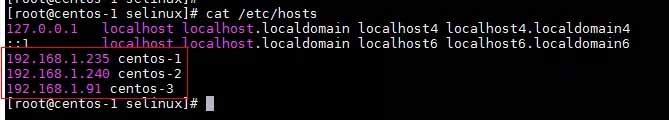
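If you choose to turn the firewall off, the check and the shutdown can be combined as below (a sketch, to be run as root; the function name is hypothetical). The alternative, opening only the ports the cluster needs, is not shown here.

```shell
#!/usr/bin/env bash
# Hypothetical helper: if firewalld is running, stop it now and keep it
# from starting again at boot.
disable_firewalld_if_active() {
  if systemctl is-active --quiet firewalld; then
    systemctl stop firewalld
    systemctl disable firewalld
    echo "firewalld stopped and disabled"
  else
    echo "firewalld is not running"
  fi
}

# Usage (on every node, as root): disable_firewalld_if_active
```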

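Second, check whether the hosts file is configured:

1. Run cat /etc/hosts to inspect the hosts file, then edit it.

2. Add the IP address and hostname of every node in the cluster.

3. Set each node's hostname with hostnamectl --static set-hostname centos-1, running it on every node in turn with that node's own name.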
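Adding the entries on each node can be made repeatable with a small helper that only appends a line when the hostname is missing. A sketch; the function name, the example IPs, and the optional file argument (useful for trying it on a copy) are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP hostname" to a hosts file unless that hostname
# already appears as a name on some non-comment line.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  if awk -v n="$name" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
       END { exit !found }' "$file"; then
    echo "$name already present in $file"
  else
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Usage (example addresses; run on every node, as root):
# add_host_entry 192.168.0.11 k8s-m1
# add_host_entry 192.168.0.12 k8s-c1
```

Running it twice with the same name leaves a single entry, so the same list of calls can be pasted on every node.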

If the node still cannot join, read that node's logs and analyze the specific problem case by case.

6) OCI runtime create failed

12月 09 08:56:41 k8s-client1 kubelet[39382]: E1209 08:56:41.691178 39382 kuberuntime_sandbox.go:68] CreatePodSandbox for pod "kube-flannel-ds-arm64-hnsrv_kube-system(2eaafd62-1822-11ea-a1d9-70fd45ac3f1f)" failed: rpc error: code = Unknown desc = failed to start sandbox container for pod "kube-flannel-ds-arm64-hnsrv": Error response from daemon: OCI runtime create failed: systemd cgroup flag passed, but systemd support for managing cgroups is not available: unknown

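The cause is in Docker's daemon.json: it specifies the systemd cgroup driver, but, as the error says, systemd support for managing cgroups is not available, so Docker fails to start the container.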

cat /etc/docker/daemon.json

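{"registry-mirrors": ["https://registry.docker-cn.co"],
"exec-opts": ["native.cgroupdriver=systemd"]}

Remove the "exec-opts": ["native.cgroupdriver=systemd"] entry so that only the registry-mirrors setting remains (keeping the JSON valid), then restart the Docker service with systemctl restart docker.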
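Before and after editing, it is worth checking what the file asks for and which driver Docker actually uses. A sketch; the function name is hypothetical and the path defaults to the file shown above:

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed (exit 0) if daemon.json requests the systemd
# cgroup driver, fail (exit 1) otherwise.
requests_systemd_cgroup() {
  local file="${1:-/etc/docker/daemon.json}"
  grep -q 'native.cgroupdriver=systemd' "$file"
}

# Usage:
# requests_systemd_cgroup && echo "daemon.json still asks for systemd"
# systemctl restart docker
# docker info --format '{{.CgroupDriver}}'   # the driver Docker is really using
```

kubelet and Docker have to use the same cgroup driver, so if kubelet had been started with --cgroup-driver=systemd, change it to match what docker info now reports.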

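7) kubectl get node fails on worker nodes

The connection to the server localhost:8080 was refused - did you specify the right host or port?

This happens because kubectl needs the kubernetes-admin credentials to talk to the API server. A worker node has no kubeconfig with those credentials, so kubectl falls back to localhost:8080, where no API server is listening.

- Solution: copy /etc/kubernetes/admin.conf from the master node to the same directory on the worker node, then configure kubectl as the message printed at the end of kubeadm init instructs ("Your Kubernetes control-plane has initialized successfully! To start using your cluster, you need to run the following as a regular user:"):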

- mkdir -p $HOME/.kube

- sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

- sudo chown $(id -u):$(id -g) $HOME/.kube/config

- Alternative solution: point the KUBECONFIG environment variable at the file:

- echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

- source ~/.bash_profile
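The copy and the setup can also be done in one step by fetching admin.conf from the master straight into ~/.kube/config. A sketch, assuming root SSH access to the master; the hostname k8s-m1 (taken from the node list above) and the function name are hypothetical defaults:

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy the master's admin kubeconfig over SSH into
# <home>/.kube/config, the default location kubectl reads.
setup_worker_kubeconfig() {
  local master="${1:-root@k8s-m1}" home_dir="${2:-$HOME}"
  mkdir -p "$home_dir/.kube"
  scp "$master:/etc/kubernetes/admin.conf" "$home_dir/.kube/config"
  chmod 600 "$home_dir/.kube/config"   # the file holds cluster-admin credentials
}

# Usage: setup_worker_kubeconfig root@k8s-m1 && kubectl get node
```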

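Summary

- Kubernetes is a powerful tool for decoupling development from operations, and its architecture is forward-looking, but all kinds of problems can come up during deployment and use. Learn to analyze them layer by layer (kubelet, node, Pod, container) together with the relevant logs.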

Published 2021-03-26 01:00
